Neuromorphic Computing "Roadmap" Envisions Analog Path to Simulating Human Brain

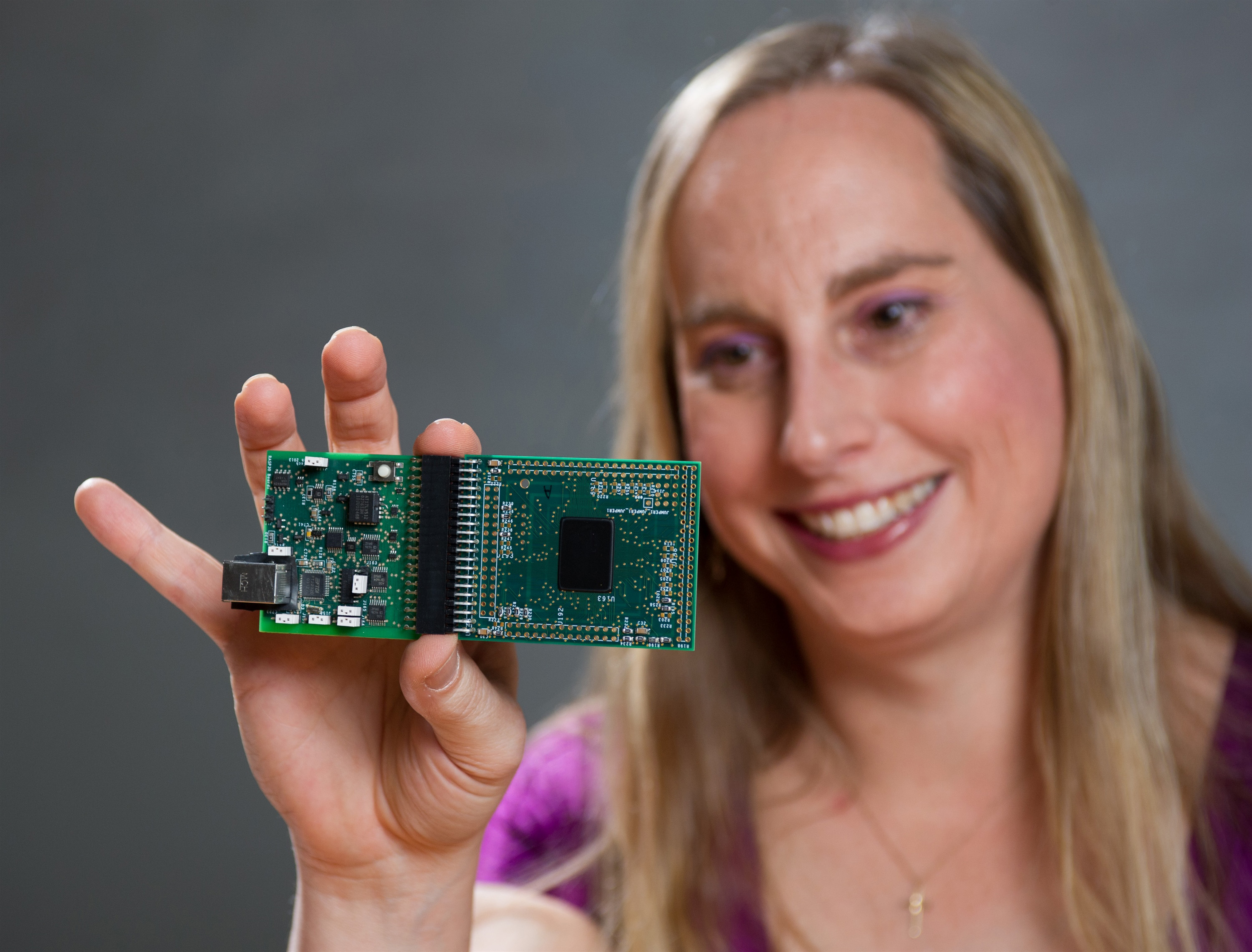

Professor Jennifer Hasler displays a field programmable analog array (FPAA) board that includes an integrated circuit with biological-based neuron structures for power-efficient calculation. Hasler’s research indicates that this type of board, which is programmable but has low power requirements, could play an important role in advancing neuromorphic computing. (Georgia Tech Photo: Rob Felt)

In the field of neuromorphic engineering, researchers study computing techniques that could someday mimic human cognition. Electrical engineers at the Georgia Institute of Technology recently published a "roadmap" that details innovative analog-based techniques that could make it possible to build a practical neuromorphic computer.

A core technological hurdle in this field involves the electrical power requirements of computing hardware. Although a human brain runs on a mere 20 watts of power, a digital computer that could approximate human cognitive abilities would require tens of thousands of integrated circuits (chips) and a hundred thousand watts of electricity or more – levels that exceed practical limits.

The Georgia Tech roadmap proposes a solution based on analog computing techniques, which require far less electrical power than traditional digital computing. The more efficient analog approach would help solve the daunting cooling and cost problems that presently make digital neuromorphic hardware systems impractical.

"To simulate the human brain, the eventual goal would be large-scale neuromorphic systems that could offer a great deal of computational power, robustness and performance," said Jennifer Hasler, a professor in the Georgia Tech School of Electrical and Computer Engineering (ECE), who is a pioneer in using analog techniques for neuromorphic computing. "A configurable analog-digital system can be expected to have a power efficiency improvement of up to 10,000 times compared to an all-digital system."
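The figures quoted above can be put side by side with a little arithmetic. The sketch below is only illustrative: it takes the article's 20-watt and 100,000-watt numbers at face value and assumes, for simplicity, that the "up to 10,000 times" efficiency improvement applies uniformly to the whole digital power budget. The function and constant names are hypothetical and do not come from the Hasler and Marr paper.

```python
# Back-of-the-envelope comparison using only figures quoted in the article.
# All names here are hypothetical; this is not a model from the paper.

BRAIN_POWER_W = 20.0            # approximate power draw of a human brain
DIGITAL_POWER_W = 100_000.0     # "a hundred thousand watts ... or more" (lower bound)
MAX_EFFICIENCY_GAIN = 10_000.0  # "up to 10,000 times" (best case, per Hasler)


def digital_to_brain_ratio(digital_w=DIGITAL_POWER_W, brain_w=BRAIN_POWER_W):
    """How many times more power the all-digital estimate needs than a brain."""
    return digital_w / brain_w


def best_case_analog_power(digital_w=DIGITAL_POWER_W, gain=MAX_EFFICIENCY_GAIN):
    """Power of a configurable analog-digital system in the best case.

    Assumption: the efficiency gain applies uniformly to the entire
    digital power budget, which is a simplification.
    """
    return digital_w / gain


if __name__ == "__main__":
    print(f"Digital vs. brain: {digital_to_brain_ratio():.0f}x")
    print(f"Best-case analog-digital power: {best_case_analog_power():.0f} W")
```

Under these assumptions, the all-digital estimate draws about 5,000 times the brain's power, while a best-case 10,000-fold improvement would bring the same workload to roughly 10 watts, the same order of magnitude as the brain itself.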

Hasler and a former student recently published a detailed plan that describes the development of computer systems capable of human-like cognition. The paper, "Finding a Roadmap to Achieve Large Neuromorphic Hardware Systems" by Hasler and Bo Marr, was published in the September 2013 edition of the journal Frontiers in Neuroscience.

"To my knowledge, this is the first time a detailed neuromorphic roadmap has been attempted," said Hasler. "We describe specific computational techniques that could offer real progress in neuromorphic systems."

Unlike digital computing, in which computers can address many different applications by processing different software programs, analog circuits have traditionally been hard-wired to address a single application. For example, cell phones use energy-efficient analog circuits for a number of specific functions, including capturing the user's voice, amplifying incoming voice signals, and controlling battery power.

Because analog devices do not have to process binary codes as digital computers do, their performance can be both faster and much less power hungry. Yet traditional analog circuits are limited because they're built for a specific application, such as processing signals or controlling power. They don't have the flexibility of digital devices that can process software, and they're vulnerable to signal disturbance issues, or noise.

In recent years, Hasler has developed a new approach to analog computing, in which silicon-based analog integrated circuits take over many of the functions now performed by familiar digital integrated circuits. These analog chips can be quickly reconfigured to provide a range of processing capabilities, in a manner that resembles conventional digital techniques in some ways.

Over the last several years, Hasler and her research group have developed devices called field-programmable analog arrays (FPAAs). Like field-programmable gate arrays (FPGAs), which are digital integrated circuits that are ubiquitous in modern computing, an FPAA can be reconfigured after it's manufactured – hence the phrase "field-programmable."

Hasler and Marr's 29-page paper traces a development process that could lead to the goal of reproducing human-brain complexity. The researchers investigate in detail a number of intermediate steps that would build on one another, helping researchers advance the technology sequentially.

For example, the researchers discuss ways to scale energy efficiency, performance and size in order to eventually achieve large-scale neuromorphic systems. The authors also address how the implementation and the application space of neuromorphic systems can be expected to evolve over time.

"A major concept here is that we have to first build smaller systems capable of a simple representation of one layer of human brain cortex," Hasler said. "When that system has been successfully demonstrated, we can then replicate it in ways that increase its complexity and performance."

Among neuromorphic computing's major hurdles are the communication issues involved in networking integrated circuits in ways that could replicate human cognition. In their paper, Hasler and Marr emphasize local interconnectivity to reduce complexity. Moreover, they argue it's possible to achieve these capabilities via purely silicon-based techniques, without relying on novel devices that are based on other approaches.

Commenting on the recent publication, Alice C. Parker, a professor of electrical engineering at the University of Southern California, said, "Professor Hasler's technology roadmap is the first deep analysis of the prospects for large scale neuromorphic intelligent systems, clearly providing practical guidance for such systems, with a nearer-term perspective than our whole-brain emulation predictions. Her expertise in analog circuits, technology and device models positions her to provide this unique perspective on neuromorphic circuits."

Eugenio Culurciello, an associate professor of biomedical engineering at Purdue University, commented, "I find this paper to be a very accurate description of the field of neuromorphic data processing systems. Hasler's devices provide some of the best performance per unit power I have ever seen and are surely on the roadmap for one of the major technologies of the future."

Said Hasler: "In this study, we conclude that useful neural computation machines based on biological principles – and potentially at the size of the human brain – seem technically within our grasp. We think that it's more a question of gathering the right research teams and finding the funding for research and development than of any insurmountable technical barriers."

Research News

Georgia Institute of Technology

177 North Avenue

Atlanta, Georgia 30332-0181 USA

Media Relations Contacts: John Toon (jtoon@gatech.edu) (404-894-6986) or Brett Israel (brett.israel@comm.gatech.edu) (404-385-1933).

Writer: Rick Robinson